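● Implementing a Convolutional Neural Network

1. Convolution Layer

import numpy as np

import matplotlib.pyplot as plt

# Function that rearranges image patches into columns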

def im2col(input_data, filter_h, filter_w, stride = 1, pad = 0):
    N, C, H, W = input_data.shape
    out_h = (H + 2 * pad - filter_h) // stride + 1
    out_w = (W + 2 * pad - filter_w) // stride + 1

    img = np.pad(input_data, [(0, 0), (0, 0), (pad, pad), (pad, pad)], 'constant')
    col = np.zeros((N, C, filter_h, filter_w, out_h, out_w))

    for y in range(filter_h):
        y_max = y + stride * out_h
        for x in range(filter_w):
            x_max = x + stride * out_w
            col[:, :, y, x, :, :] = img[:, :, y:y_max:stride, x:x_max:stride]

    # The transpose and reshape flatten each receptive field into one row
    col = col.transpose(0, 4, 5, 1, 2, 3).reshape(N * out_h * out_w, -1)
    return col
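To make the layout of the result concrete, here is a minimal sketch that runs `im2col` on a toy 4×4 input. The function is restated so the block runs on its own; the toy array `x` is an assumption made only for illustration.

```python
import numpy as np

def im2col(input_data, filter_h, filter_w, stride=1, pad=0):
    N, C, H, W = input_data.shape
    out_h = (H + 2 * pad - filter_h) // stride + 1
    out_w = (W + 2 * pad - filter_w) // stride + 1
    img = np.pad(input_data, [(0, 0), (0, 0), (pad, pad), (pad, pad)], 'constant')
    col = np.zeros((N, C, filter_h, filter_w, out_h, out_w))
    for y in range(filter_h):
        y_max = y + stride * out_h
        for x in range(filter_w):
            x_max = x + stride * out_w
            col[:, :, y, x, :, :] = img[:, :, y:y_max:stride, x:x_max:stride]
    return col.transpose(0, 4, 5, 1, 2, 3).reshape(N * out_h * out_w, -1)

# Toy input: 1 image, 1 channel, 4x4 pixels holding the values 0..15
x = np.arange(16, dtype=float).reshape(1, 1, 4, 4)
col = im2col(x, 3, 3)

print(col.shape)  # (4, 9): 2x2 output positions, one flattened 3x3 patch per row
print(col[0])     # top-left patch:     [ 0.  1.  2.  4.  5.  6.  8.  9. 10.]
print(col[3])     # bottom-right patch: [ 5.  6.  7.  9. 10. 11. 13. 14. 15.]
```

Each row is one receptive field, so a convolution reduces to a single matrix product between `col` and the flattened filters.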

# Function that converts columns back into an image

def col2im(col, input_shape, filter_h, filter_w, stride = 1, pad = 0):
    N, C, H, W = input_shape
    out_h = (H + 2 * pad - filter_h) // stride + 1
    out_w = (W + 2 * pad - filter_w) // stride + 1
    col = col.reshape(N, out_h, out_w, C, filter_h, filter_w).transpose(0, 3, 4, 5, 1, 2)

    img = np.zeros((N, C, H + 2 * pad + stride - 1, W + 2 * pad + stride - 1))

    for y in range(filter_h):
        y_max = y + stride * out_h
        for x in range(filter_w):
            x_max = x + stride * out_w
            # Overlapping patches contribute to the same pixel, so accumulate with +=
            img[:, :, y:y_max:stride, x:x_max:stride] += col[:, :, y, x, :, :]

    return img[:, :, pad:H + pad, pad:W + pad]

# 2D convolution layer class

class Conv2D:
    def __init__(self, W, b, stride = 1, pad = 0):
        self.W = W
        self.b = b
        self.stride = stride
        self.pad = pad

        # Cached in forward() for use in backward()
        self.input_data = None
        self.col = None
        self.col_W = None

        self.dW = None
        self.db = None

    def forward(self, input_data):
        FN, C, FH, FW = self.W.shape
        N, C, H, W = input_data.shape
        out_h = (H + 2 * self.pad - FH) // self.stride + 1
        out_w = (W + 2 * self.pad - FW) // self.stride + 1

        col = im2col(input_data, FH, FW, self.stride, self.pad)
        col_W = self.W.reshape(FN, -1).T

        out = np.dot(col, col_W) + self.b
        output = out.reshape(N, out_h, out_w, -1).transpose(0, 3, 1, 2)

        self.input_data = input_data
        self.col = col
        self.col_W = col_W

        return output

    def backward(self, dout):
        FN, C, FH, FW = self.W.shape
        # (N, FN, out_h, out_w) -> (N * out_h * out_w, FN); this needs transpose, not reshape
        dout = dout.transpose(0, 2, 3, 1).reshape(-1, FN)

        self.db = np.sum(dout, axis = 0)
        self.dW = np.dot(self.col.T, dout)
        self.dW = self.dW.transpose(1, 0).reshape(FN, C, FH, FW)

        dcol = np.dot(dout, self.col_W.T)
        dx = col2im(dcol, self.input_data.shape, FH, FW, self.stride, self.pad)

        return dx
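One way to gain confidence in the backward pass is a numerical gradient check. The sketch below is a condensed, function-based restatement of the forward pass and the `dW`/`db` computation (the helper names `conv_forward` and `conv_backward_params` are hypothetical, not part of the class above). It compares the analytic gradient with central differences on a small random input.

```python
import numpy as np

def im2col(x, fh, fw, stride=1, pad=0):
    N, C, H, W = x.shape
    oh = (H + 2 * pad - fh) // stride + 1
    ow = (W + 2 * pad - fw) // stride + 1
    img = np.pad(x, [(0, 0), (0, 0), (pad, pad), (pad, pad)], 'constant')
    col = np.zeros((N, C, fh, fw, oh, ow))
    for i in range(fh):
        for j in range(fw):
            col[:, :, i, j] = img[:, :, i:i + stride * oh:stride, j:j + stride * ow:stride]
    return col.transpose(0, 4, 5, 1, 2, 3).reshape(N * oh * ow, -1), oh, ow

def conv_forward(x, W, b, stride, pad):
    FN, C, FH, FW = W.shape
    col, oh, ow = im2col(x, FH, FW, stride, pad)
    out = col @ W.reshape(FN, -1).T + b
    return out.reshape(x.shape[0], oh, ow, FN).transpose(0, 3, 1, 2), col

def conv_backward_params(dout, col, W_shape):
    FN, C, FH, FW = W_shape
    # (N, FN, oh, ow) -> (N * oh * ow, FN), matching the row order of col
    dout2d = dout.transpose(0, 2, 3, 1).reshape(-1, FN)
    dW = (col.T @ dout2d).transpose(1, 0).reshape(FN, C, FH, FW)
    db = dout2d.sum(axis=0)
    return dW, db

rng = np.random.default_rng(0)
x = rng.standard_normal((2, 3, 5, 5))
W = rng.standard_normal((4, 3, 3, 3))
b = rng.standard_normal(4)

out, col = conv_forward(x, W, b, stride=2, pad=1)
G = rng.standard_normal(out.shape)          # upstream gradient of the scalar loss sum(out * G)
dW, db = conv_backward_params(G, col, W.shape)

# Central differences on every weight
eps = 1e-5
num_dW = np.zeros_like(W)
for idx in np.ndindex(*W.shape):
    old = W[idx]
    W[idx] = old + eps
    plus = np.sum(conv_forward(x, W, b, 2, 1)[0] * G)
    W[idx] = old - eps
    minus = np.sum(conv_forward(x, W, b, 2, 1)[0] * G)
    W[idx] = old
    num_dW[idx] = (plus - minus) / (2 * eps)

print("max |dW - numerical dW|:", np.max(np.abs(dW - num_dW)))
```

If `dout` were reshaped instead of transposed before flattening, the axes would be scrambled and this difference would not be close to zero.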

- Convolution layer test

def init_weight(num_filters, data_dim, kernel_size, stride = 1, pad = 0, weight_std = 0.01):
    # stride and pad are accepted but unused here; they only take effect when passed to Conv2D
    weights = weight_std * np.random.randn(num_filters, data_dim, kernel_size, kernel_size)
    biases = np.zeros(num_filters)
    return weights, biases

# Display the base image

img_url = "https://upload.wikimedia.org/wikipedia/ko/thumb/2/24/Lenna.png/440px-Lenna.png"

image_gray = url_to_image(img_url, gray = True)

image_gray = image_gray.reshape(image_gray.shape[0], -1, 1)

print("image.shape:", image_gray.shape)

image_gray = np.expand_dims(image_gray.transpose(2, 0, 1), axis = 0)

plt.imshow(image_gray[0, 0, :, :], cmap = 'gray')

plt.show()

# Convolution with the given weights and bias (1)

W, b = init_weight(1, 1, 3)

conv = Conv2D(W, b)

output = conv.forward(image_gray)

print("Conv Layer size:", output.shape)

# Output

Conv Layer size: (1, 1, 438, 438)

plt.imshow(output[0, 0, :, :], cmap = 'gray')

plt.show()

# Convolution with the given weights and bias (2)

W2, b2 = init_weight(1, 1, 3, stride = 2)

conv2 = Conv2D(W2, b2, stride = 2)

output2 = conv2.forward(image_gray)

print("Conv Layer size:", output2.shape)

# Output

Conv Layer size: (1, 1, 219, 219)

plt.imshow(output2[0, 0, :, :], cmap = 'gray')

plt.show()
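The printed shapes follow directly from the output-size formula used inside `im2col` and `Conv2D.forward`. A minimal sketch, with `conv_output_size` as a hypothetical helper name:

```python
def conv_output_size(input_size, kernel_size, stride=1, pad=0):
    """Spatial output size of a convolution (or pooling) window."""
    return (input_size + 2 * pad - kernel_size) // stride + 1

# 440x440 input, as in the tests in this post
print(conv_output_size(440, 3))            # 438
print(conv_output_size(440, 3, stride=2))  # 219
print(conv_output_size(440, 3, stride=3))  # 146
print(conv_output_size(440, 5))            # 436
```

The floor division means that trailing pixels which do not fill a complete window are simply dropped.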

# Display the color image

img_url = "https://upload.wikimedia.org/wikipedia/ko/thumb/2/24/Lenna.png/440px-Lenna.png"

image_color = url_to_image(img_url)

print("image.shape:", image_color.shape)

plt.imshow(image_color)

plt.show()

image_color = np.expand_dims(image_color.transpose(2, 0, 1), axis = 0)

print("image.shape:", image_color.shape)

# Convolution with the given weights and bias (3)

W3, b3 = init_weight(10, 3, 3)

conv3 = Conv2D(W3, b3)

output3 = conv3.forward(image_color)

print("Conv Layer size:", output3.shape)

# Output

Conv Layer size: (1, 10, 438, 438)

plt.imshow(output3[0, 3, :, :], cmap = "gray")

plt.show()

plt.imshow(output3[0, 8, :, :], cmap = "gray")

plt.show()

- Testing with several copies of the same image (batch processing)

img_url = "https://upload.wikimedia.org/wikipedia/ko/thumb/2/24/Lenna.png/440px-Lenna.png"

image_gray = url_to_image(img_url, gray = True)

image_gray = image_gray.reshape(image_gray.shape[0], -1, 1)

print("image.shape:", image_gray.shape)

image_gray = image_gray.transpose(2, 0, 1)

print("image_gray.shape", image_gray.shape)

# Output

image.shape: (440, 440, 1)

image_gray.shape (1, 440, 440)

batch_image_gray = np.repeat(image_gray[np.newaxis, :, :, :], 15, axis = 0)

print(batch_image_gray.shape)

# Output

(15, 1, 440, 440)

W4, b4 = init_weight(10, 1, 3, stride = 2)

conv4 = Conv2D(W4, b4)

output4 = conv4.forward(batch_image_gray)

print("Conv Layer size:", output4.shape)

# Output

Conv Layer size: (15, 10, 438, 438)

plt.figure(figsize = (10, 10))

plt.subplot(1, 3, 1)

plt.title("Filter 3")

plt.imshow(output4[3, 2, :, :], cmap = 'gray')

plt.subplot(1, 3, 2)

plt.title("Filter 6")

plt.imshow(output4[3, 5, :, :], cmap = 'gray')

plt.subplot(1, 3, 3)

plt.title("Filter 10")

plt.imshow(output4[3, 9, :, :], cmap = 'gray')

plt.show()

# For the color image

W5, b5 = init_weight(32, 3, 3, stride = 3)

conv5 = Conv2D(W5, b5, stride = 3)

output5 = conv5.forward(image_color)

print("Conv Layer size:", output5.shape)

# Output

Conv Layer size: (1, 32, 146, 146)

plt.figure(figsize = (10, 10))

plt.subplot(1, 3, 1)

plt.title("Filter 21")

plt.imshow(output5[0, 20, :, :], cmap = 'gray')

plt.subplot(1, 3, 2)

plt.title("Filter 15")

plt.imshow(output5[0, 14, :, :], cmap = 'gray')

plt.subplot(1, 3, 3)

plt.title("Filter 11")

plt.imshow(output5[0, 10, :, :], cmap = 'gray')

plt.show()

- Batch processing of the same image (color)

img_url = "https://upload.wikimedia.org/wikipedia/ko/thumb/2/24/Lenna.png/440px-Lenna.png"

image_color = url_to_image(img_url)

print("image.shape:", image_color.shape)

image_color = image_color.transpose(2, 0, 1)

print("image.shape:", image_color.shape)

# Output

image.shape: (440, 440, 3)

image.shape: (3, 440, 440)

batch_image_color = np.repeat(image_color[np.newaxis, :, :, :], 15, axis = 0)

print(batch_image_color.shape)

# Output

(15, 3, 440, 440)

W6, b6 = init_weight(64, 3, 5)

conv6 = Conv2D(W6, b6)

output6 = conv6.forward(batch_image_color)

print("Conv Layer size:", output6.shape)

# Output

Conv Layer size: (15, 64, 436, 436)

plt.figure(figsize = (10, 10))

plt.subplot(1, 3, 1)

plt.title("Filter 50")

plt.imshow(output6[10, 49, :, :], cmap = 'gray')

plt.subplot(1, 3, 2)

plt.title("Filter 31")

plt.imshow(output6[10, 30, :, :], cmap = 'gray')

plt.subplot(1, 3, 3)

plt.title("Filter 1")

plt.imshow(output6[10, 0, :, :], cmap = 'gray')

plt.show()

2. Pooling Layer

class Pooling2D:
    def __init__(self, kernel_size = 2, stride = 1, pad = 0):
        self.kernel_size = kernel_size
        self.stride = stride
        self.pad = pad

        # Cached in forward() for use in backward()
        self.input_data = None
        self.arg_max = None

    def forward(self, input_data):
        N, C, H, W = input_data.shape
        # Include the padding so the shape agrees with what im2col produces
        out_h = (H + 2 * self.pad - self.kernel_size) // self.stride + 1
        out_w = (W + 2 * self.pad - self.kernel_size) // self.stride + 1

        col = im2col(input_data, self.kernel_size, self.kernel_size, self.stride, self.pad)
        # One row per (position, channel) window
        col = col.reshape(-1, self.kernel_size * self.kernel_size)

        arg_max = np.argmax(col, axis = 1)
        out = np.max(col, axis = 1)
        output = out.reshape(N, out_h, out_w, C).transpose(0, 3, 1, 2)

        self.input_data = input_data
        self.arg_max = arg_max

        return output

    def backward(self, dout):
        dout = dout.transpose(0, 2, 3, 1)

        pool_size = self.kernel_size * self.kernel_size
        dmax = np.zeros((dout.size, pool_size))
        # Route each upstream gradient to the position that held the maximum
        dmax[np.arange(self.arg_max.size), self.arg_max.flatten()] = dout.flatten()
        dmax = dmax.reshape(dout.shape + (pool_size,))

        dcol = dmax.reshape(dmax.shape[0] * dmax.shape[1] * dmax.shape[2], -1)
        dx = col2im(dcol, self.input_data.shape, self.kernel_size, self.kernel_size, self.stride, self.pad)

        return dx
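What the forward and backward passes compute can be seen on a single 4×4 feature map. The sketch below is not the class above: it uses a plain reshape instead of `im2col`, which only works when the stride equals the kernel size, and the array values are made up for illustration.

```python
import numpy as np

x = np.array([[1, 3, 2, 4],
              [5, 6, 1, 0],
              [7, 2, 9, 8],
              [0, 1, 3, 4]], dtype=float)
k = 2  # 2x2 window with stride 2 (non-overlapping)

# Split into 2x2 windows: axes become (block_row, block_col, flattened window)
windows = x.reshape(2, k, 2, k).transpose(0, 2, 1, 3).reshape(2, 2, k * k)

# Forward: keep the maximum of each window and remember where it was
pooled = windows.max(axis=2)
arg_max = windows.argmax(axis=2)
print(pooled)    # [[6. 4.] [7. 9.]]

# Backward: each upstream gradient flows only to the position of the maximum
dout = np.ones((2, 2))
dwin = np.zeros_like(windows)
np.put_along_axis(dwin, arg_max[..., None], dout[..., None], axis=2)
dx = dwin.reshape(2, 2, k, k).transpose(0, 2, 1, 3).reshape(4, 4)
print(dx)
```

All other positions receive a gradient of zero, which is why `Pooling2D` has to store `arg_max` during the forward pass.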

- Pooling layer test

- 2D image

- (Height, Width, 1)

img_url = "https://upload.wikimedia.org/wikipedia/ko/thumb/2/24/Lenna.png/440px-Lenna.png"

image_gray = url_to_image(img_url, gray = True)

image_gray = image_gray.reshape(image_gray.shape[0], -1, 1)

print("image.shape:", image_gray.shape)

# Output

image.shape: (440, 440, 1)

image_gray = np.expand_dims(image_gray.transpose(2, 0, 1), axis = 0)

plt.imshow(image_gray[0, 0, :, :], cmap = "gray")

plt.show()

W, b = init_weight(8, 1, 3)

conv = Conv2D(W, b)

pool = Pooling2D(stride = 2, kernel_size = 2)

output1 = conv.forward(image_gray)

print("Conv size:", output1.shape)

output1 = pool.forward(output1)

print("Pooling Layer size:", output1.shape)

# Output

Conv size: (1, 8, 438, 438)

Pooling Layer size: (1, 8, 219, 219)

# Visualize the result after max pooling

plt.figure(figsize = (10, 10))

plt.subplot(1, 3, 1)

plt.title("Feature Map 8")

plt.imshow(output1[0, 7, :, :], cmap = 'gray')

plt.subplot(1, 3, 2)

plt.title("Feature Map 4")

plt.imshow(output1[0, 3, :, :], cmap = 'gray')

plt.subplot(1, 3, 3)

plt.title("Feature Map 1")

plt.imshow(output1[0, 0, :, :], cmap = 'gray')

plt.show()

# Example 2 (with a different weight W and bias b)

W2, b2 = init_weight(32, 1, 3, stride = 2)

conv2 = Conv2D(W2, b2)

pool = Pooling2D(stride = 2, kernel_size = 2)

output2 = conv2.forward(image_gray)

print("Conv size:", output2.shape)

output2 = pool.forward(output2)

print("Pooling Layer size:", output2.shape)

# Output

Conv size: (1, 32, 438, 438)

Pooling Layer size: (1, 32, 219, 219)

# Visualization

plt.figure(figsize = (10, 10))

plt.subplot(1, 3, 1)

plt.title("Feature Map 8")

plt.imshow(output2[0, 7, :, :], cmap = 'gray')

plt.subplot(1, 3, 2)

plt.title("Feature Map 4")

plt.imshow(output2[0, 3, :, :], cmap = 'gray')

plt.subplot(1, 3, 3)

plt.title("Feature Map 1")

plt.imshow(output2[0, 0, :, :], cmap = 'gray')

plt.show()

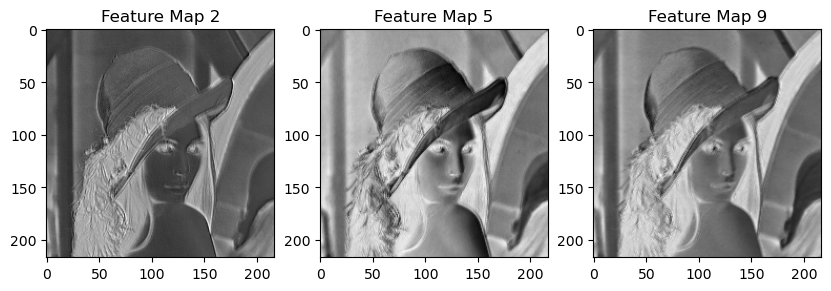

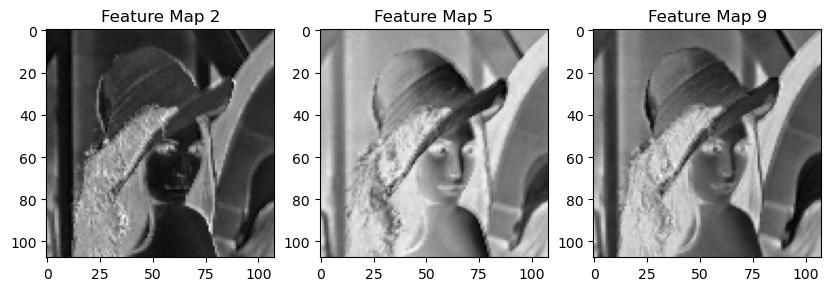

- Batch processing of the same image

- Color image

- conv → maxpooling → conv → maxpooling

- Visualization

- The 5th image

- Checked through feature maps [2, 5, 9]

img_url = "https://upload.wikimedia.org/wikipedia/ko/thumb/2/24/Lenna.png/440px-Lenna.png"

image_color = url_to_image(img_url)

print("image.shape:", image_color.shape)

# Output

image.shape: (440, 440, 3)

plt.imshow(image_color)

plt.show()

image_color = image_color.transpose(2, 0, 1)

print("image.shape:", image_color.shape)

# Output

image.shape: (3, 440, 440)

# Build a batch of 15 images

batch_image_color = np.repeat(image_color[np.newaxis, :, :, :], 15, axis = 0)

print(batch_image_color.shape)

# Output

(15, 3, 440, 440)

W, b = init_weight(10, 3, 3)

conv1 = Conv2D(W, b)

pool = Pooling2D(stride = 2, kernel_size = 2)

# Result of the convolution only

output1 = conv1.forward(batch_image_color)

print(output1.shape)

# Output

(15, 10, 438, 438)

# Visualize the convolution-only result

plt.figure(figsize = (10, 10))

plt.subplot(1, 3, 1)

plt.title("Feature Map 2")

plt.imshow(output1[4, 1, :, :], cmap = 'gray')

plt.subplot(1, 3, 2)

plt.title("Feature Map 5")

plt.imshow(output1[4, 4, :, :], cmap = 'gray')

plt.subplot(1, 3, 3)

plt.title("Feature Map 9")

plt.imshow(output1[4, 8, :, :], cmap = 'gray')

plt.show()

# Result after pooling

output1 = pool.forward(output1)

print(output1.shape)

# Output

(15, 10, 219, 219)

# Visualize the result after pooling

plt.figure(figsize = (10, 10))

plt.subplot(1, 3, 1)

plt.title("Feature Map 2")

plt.imshow(output1[4, 1, :, :], cmap = 'gray')

plt.subplot(1, 3, 2)

plt.title("Feature Map 5")

plt.imshow(output1[4, 4, :, :], cmap = 'gray')

plt.subplot(1, 3, 3)

plt.title("Feature Map 9")

plt.imshow(output1[4, 8, :, :], cmap = 'gray')

plt.show()

# Example 2, with different weights

W2, b2 = init_weight(30, 10, 3)

conv2 = Conv2D(W2, b2)

pool = Pooling2D(stride = 2, kernel_size = 2)

# Result of the convolution only

output2 = conv2.forward(output1)

print(output2.shape)

# Output

(15, 30, 217, 217)

# Visualize the convolution-only result

plt.figure(figsize = (10, 10))

plt.subplot(1, 3, 1)

plt.title("Feature Map 2")

plt.imshow(output2[4, 1, :, :], cmap = 'gray')

plt.subplot(1, 3, 2)

plt.title("Feature Map 5")

plt.imshow(output2[4, 4, :, :], cmap = 'gray')

plt.subplot(1, 3, 3)

plt.title("Feature Map 9")

plt.imshow(output2[4, 8, :, :], cmap = 'gray')

plt.show()

# Result after pooling

output2 = pool.forward(output2)

print(output2.shape)

# Output

(15, 30, 108, 108)

# Visualize the result after pooling

plt.figure(figsize = (10, 10))

plt.subplot(1, 3, 1)

plt.title("Feature Map 2")

plt.imshow(output2[4, 1, :, :], cmap = 'gray')

plt.subplot(1, 3, 2)

plt.title("Feature Map 5")

plt.imshow(output2[4, 4, :, :], cmap = 'gray')

plt.subplot(1, 3, 3)

plt.title("Feature Map 9")

plt.imshow(output2[4, 8, :, :], cmap = 'gray')

plt.show()
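The spatial sizes seen in the conv → maxpooling → conv → maxpooling pipeline above can be traced with the same output-size formula. A minimal sketch, where `window_output_size` is a hypothetical helper name:

```python
def window_output_size(size, kernel, stride=1, pad=0):
    # Same formula for convolution and pooling windows
    return (size + 2 * pad - kernel) // stride + 1

# (layer name, kernel size, stride) for each stage of the pipeline above
stages = [("conv 3x3", 3, 1), ("pool 2x2", 2, 2), ("conv 3x3", 3, 1), ("pool 2x2", 2, 2)]

size = 440
sizes = [size]
for name, kernel, stride in stages:
    size = window_output_size(size, kernel, stride)
    sizes.append(size)
    print(f"{name}: {size} x {size}")

print(sizes)  # [440, 438, 219, 217, 108]
```

The last pooling step maps 217 to 108 because the final row and column do not fill a complete 2×2 window and are discarded.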

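3. Representative CNN Models

- LeNet - 5

- AlexNet

- Uses ReLU as the activation function

- Uses layers that perform Local Response Normalization (LRN)

- Dropout

- VGG - 16

- The filter (kernel) size is 3×3 in every convolution layer

- 2×2 MaxPooling

- The number of filters doubles from one conv block to the next

64 → 128 → 256 → 512 (the last block stays at 512)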
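A commonly cited motivation for using only 3×3 kernels is that two stacked 3×3 layers cover the same 5×5 receptive field as one 5×5 layer while using fewer weights. This rationale is background added here, not something the list above states. The sketch assumes stride 1 and 64 input and output channels, chosen only for illustration.

```python
def conv_params(kernel, in_ch, out_ch):
    # Weights plus one bias per output channel
    return kernel * kernel * in_ch * out_ch + out_ch

def stacked_receptive_field(kernel, num_layers):
    # Receptive field of num_layers stacked stride-1 convolutions
    return 1 + num_layers * (kernel - 1)

C = 64  # assumed channel count
two_3x3 = 2 * conv_params(3, C, C)
one_5x5 = conv_params(5, C, C)

print(stacked_receptive_field(3, 2))  # 5
print(two_3x3)                        # 73856
print(one_5x5)                        # 102464
```

The stacked version also inserts an extra non-linearity between the two layers.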

4. Implementing CNN Training - MNIST

- modules import

import tensorflow as tf

import numpy as np

import matplotlib.pyplot as plt

from collections import OrderedDict

- Util Functions

def im2col(input_data, filter_h, filter_w, stride = 1, pad = 0):
    N, C, H, W = input_data.shape
    out_h = (H + 2 * pad - filter_h) // stride + 1
    out_w = (W + 2 * pad - filter_w) // stride + 1

    img = np.pad(input_data, [(0, 0), (0, 0), (pad, pad), (pad, pad)], 'constant')
    col = np.zeros((N, C, filter_h, filter_w, out_h, out_w))

    for y in range(filter_h):
        y_max = y + stride * out_h
        for x in range(filter_w):
            x_max = x + stride * out_w
            col[:, :, y, x, :, :] = img[:, :, y:y_max:stride, x:x_max:stride]

    # The transpose and reshape flatten each receptive field into one row
    col = col.transpose(0, 4, 5, 1, 2, 3).reshape(N * out_h * out_w, -1)
    return col

def col2im(col, input_shape, filter_h, filter_w, stride = 1, pad = 0):
    N, C, H, W = input_shape
    out_h = (H + 2 * pad - filter_h) // stride + 1
    out_w = (W + 2 * pad - filter_w) // stride + 1
    col = col.reshape(N, out_h, out_w, C, filter_h, filter_w).transpose(0, 3, 4, 5, 1, 2)

    img = np.zeros((N, C, H + 2 * pad + stride - 1, W + 2 * pad + stride - 1))

    for y in range(filter_h):
        y_max = y + stride * out_h
        for x in range(filter_w):
            x_max = x + stride * out_w
            img[:, :, y:y_max:stride, x:x_max:stride] += col[:, :, y, x, :, :]

    return img[:, :, pad:H + pad, pad:W + pad]

def softmax(x):
    if x.ndim == 2:
        # Batch input: work on the transpose so the class axis is axis 0
        x = x.T
        x = x - np.max(x, axis = 0)
        y = np.exp(x) / np.sum(np.exp(x), axis = 0)
        return y.T

    x = x - np.max(x)
    return np.exp(x) / np.sum(np.exp(x))
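Subtracting the maximum before exponentiating is what keeps `softmax` numerically stable. A minimal sketch comparing it with the naive formula on large logits (the 2D branch is written here with `keepdims` instead of a transpose; the logits are made up):

```python
import numpy as np

def softmax(x):
    if x.ndim == 2:
        x = x - np.max(x, axis=1, keepdims=True)
        e = np.exp(x)
        return e / np.sum(e, axis=1, keepdims=True)
    x = x - np.max(x)
    e = np.exp(x)
    return e / np.sum(e)

logits = np.array([[1000.0, 1001.0, 1002.0],
                   [1.0, 2.0, 3.0]])
probs = softmax(logits)
print(probs)  # both rows are identical: shifting the logits does not change the result

# Naive version overflows: exp(1000) is inf, and inf / inf is nan
with np.errstate(over='ignore', invalid='ignore'):
    naive = np.exp(logits[0]) / np.sum(np.exp(logits[0]))
print(naive)  # [nan nan nan]
```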

def mean_squared_error(pred_y, true_y):
    return 0.5 * np.sum((pred_y - true_y)**2)

def cross_entropy_error(pred_y, true_y):
    if pred_y.ndim == 1:
        true_y = true_y.reshape(1, true_y.size)
        pred_y = pred_y.reshape(1, pred_y.size)

    # One-hot labels are converted to class indices
    if true_y.size == pred_y.size:
        true_y = true_y.argmax(axis = 1)

    batch_size = pred_y.shape[0]
    return -np.sum(np.log(pred_y[np.arange(batch_size), true_y] + 1e-7)) / batch_size
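`cross_entropy_error` accepts either class indices or one-hot labels and averages the negative log-probability of the correct class over the batch. A small worked example, with made-up predictions:

```python
import numpy as np

def cross_entropy_error(pred_y, true_y):
    if pred_y.ndim == 1:
        true_y = true_y.reshape(1, true_y.size)
        pred_y = pred_y.reshape(1, pred_y.size)
    if true_y.size == pred_y.size:
        true_y = true_y.argmax(axis=1)
    batch_size = pred_y.shape[0]
    return -np.sum(np.log(pred_y[np.arange(batch_size), true_y] + 1e-7)) / batch_size

pred = np.array([[0.7, 0.2, 0.1],
                 [0.1, 0.1, 0.8]])
labels = np.array([0, 2])       # class indices
one_hot = np.eye(3)[labels]     # the same labels, one-hot encoded

loss_idx = cross_entropy_error(pred, labels)
loss_hot = cross_entropy_error(pred, one_hot)
expected = -(np.log(0.7) + np.log(0.8)) / 2

print(loss_idx, loss_hot, expected)  # all approximately 0.2899
```

The `1e-7` offset only guards against `log(0)`; it shifts the result by a negligible amount.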

def softmax_loss(X, true_y):
    pred_y = softmax(X)
    return cross_entropy_error(pred_y, true_y)

- Util Classes

class ReLU:
    def __init__(self):
        self.mask = None

    def forward(self, x):
        # Remember which inputs were non-positive; their gradient is zero
        self.mask = (x <= 0)
        out = x.copy()
        out[self.mask] = 0
        return out

    def backward(self, dout):
        dout[self.mask] = 0
        dx = dout
        return dx
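A quick check of the ReLU forward and backward behavior on a tiny array. This sketch differs from the class above in one respect, which is a choice made here for illustration: `backward` copies `dout` so the caller's array is not modified in place.

```python
import numpy as np

class ReLU:
    def __init__(self):
        self.mask = None

    def forward(self, x):
        self.mask = (x <= 0)
        out = x.copy()
        out[self.mask] = 0
        return out

    def backward(self, dout):
        dx = dout.copy()      # copy so the upstream gradient array stays untouched
        dx[self.mask] = 0
        return dx

relu = ReLU()
x = np.array([[-1.0, 2.0],
              [0.0, 3.0]])
out = relu.forward(x)
dout = np.ones_like(x)
dx = relu.backward(dout)

print(out)  # [[0. 2.] [0. 3.]]
print(dx)   # [[0. 1.] [0. 1.]]
```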

class Sigmoid:
    def __init__(self):
        self.out = None

    def forward(self, x):
        # No sigmoid() helper is defined in this post, so compute it inline
        out = 1 / (1 + np.exp(-x))
        self.out = out
        return out

    def backward(self, dout):
        dx = dout * (1.0 - self.out) * self.out
        return dx

class Layer:
    def __init__(self, W, b):
        self.W = W
        self.b = b

        self.input_data = None
        self.input_data_shape = None

        self.dW = None
        self.db = None

    def forward(self, input_data):
        # Flatten (N, C, H, W) feature maps to (N, C * H * W)
        self.input_data_shape = input_data.shape
        input_data = input_data.reshape(input_data.shape[0], -1)
        self.input_data = input_data

        out = np.dot(self.input_data, self.W) + self.b
        return out

    def backward(self, dout):
        dx = np.dot(dout, self.W.T)
        self.dW = np.dot(self.input_data.T, dout)
        self.db = np.sum(dout, axis = 0)

        # Restore the original input shape for the preceding layer
        dx = dx.reshape(*self.input_data_shape)
        return dx

class Softmax:
    def __init__(self):
        self.loss = None
        self.y = None
        self.t = None

    def forward(self, x, t):
        self.t = t
        self.y = softmax(x)
        self.loss = cross_entropy_error(self.y, self.t)
        return self.loss

    def backward(self, dout = 1):
        batch_size = self.t.shape[0]

        if self.t.size == self.y.size:
            # One-hot labels
            dx = (self.y - self.t) / batch_size
        else:
            # Class-index labels
            dx = self.y.copy()
            dx[np.arange(batch_size), self.t] -= 1
            dx = dx / batch_size

        return dx
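The backward pass relies on the fact that the gradient of softmax followed by cross-entropy is `(y - t) / batch_size`. A minimal numerical check of that identity, using standalone functions (`loss_fn` is a hypothetical name, and the `1e-7` offset is left out so the comparison is exact up to rounding):

```python
import numpy as np

def softmax(x):
    x = x - np.max(x, axis=1, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=1, keepdims=True)

def loss_fn(x, t):
    y = softmax(x)
    return -np.mean(np.log(y[np.arange(len(t)), t]))

rng = np.random.default_rng(0)
x = rng.standard_normal((4, 3))   # 4 samples, 3 classes
t = np.array([0, 2, 1, 2])        # class-index labels
batch_size = x.shape[0]

# Analytic gradient: y with 1 subtracted at the true class, divided by batch size
analytic = softmax(x)
analytic[np.arange(batch_size), t] -= 1
analytic /= batch_size

# Numerical gradient by central differences
eps = 1e-5
numeric = np.zeros_like(x)
for idx in np.ndindex(*x.shape):
    old = x[idx]
    x[idx] = old + eps
    plus = loss_fn(x, t)
    x[idx] = old - eps
    minus = loss_fn(x, t)
    x[idx] = old
    numeric[idx] = (plus - minus) / (2 * eps)

max_diff = np.max(np.abs(analytic - numeric))
print("max difference:", max_diff)
```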

class SGD:
    def __init__(self, learning_rate = 0.01):
        self.learning_rate = learning_rate

    def update(self, params, grads):
        for key in params.keys():
            # In-place update, so layers that hold the same arrays see the new values
            params[key] -= self.learning_rate * grads[key]

- Load data

np.random.seed(42)

mnist = tf.keras.datasets.mnist

(x_train, t_train), (x_test, t_test) = mnist.load_data()

num_classes = 10

print(x_train.shape)

print(t_train.shape)

print(x_test.shape)

print(t_test.shape)

# Output

(60000, 28, 28)

(60000,)

(10000, 28, 28)

(10000,)

# Add a channel dimension

x_train, x_test = np.expand_dims(x_train, axis = 1), np.expand_dims(x_test, axis = 1)

print(x_train.shape)

print(t_train.shape)

print(x_test.shape)

print(t_test.shape)

# Output

(60000, 1, 28, 28)

(60000,)

(10000, 1, 28, 28)

(10000,)

# Reduce the amount of data

x_train = x_train[:3000]

x_test = x_test[:500]

t_train = t_train[:3000]

t_test = t_test[:500]

print(x_train.shape)

print(t_train.shape)

print(x_test.shape)

print(t_test.shape)

# Output

(3000, 1, 28, 28)

(3000,)

(500, 1, 28, 28)

(500,)

- Build Model

class MyModel:
    def __init__(self, input_dim = (1, 28, 28), num_outputs = 10):
        conv1_block = {'num_filters': 30,
                       'kernel_size': 3,
                       'stride': 1,
                       'pad': 0}

        input_size = input_dim[1]
        conv_output_size = ((input_size - conv1_block['kernel_size'] + 2 * conv1_block['pad']) // conv1_block['stride']) + 1
        # Flattened feature size after the 2x2, stride-2 pooling layer
        pool_output_size = int(conv1_block['num_filters'] * (conv_output_size / 2) * (conv_output_size / 2))

        self.params = {}
        self.params['W1'], self.params['b1'] = self.__init_weight_conv(conv1_block['num_filters'], input_dim[0], conv1_block['kernel_size'])
        self.params['W2'], self.params['b2'] = self.__init_weight_fc(pool_output_size, 256)
        self.params['W3'], self.params['b3'] = self.__init_weight_fc(256, num_outputs)

        self.layers = OrderedDict()
        self.layers['Conv1'] = Conv2D(self.params['W1'], self.params['b1'], conv1_block['stride'], conv1_block['pad'])
        self.layers['ReLU1'] = ReLU()
        self.layers['Pool1'] = Pooling2D(kernel_size = 2, stride = 2)
        self.layers['FC1'] = Layer(self.params['W2'], self.params['b2'])
        self.layers['ReLU2'] = ReLU()
        self.layers['FC2'] = Layer(self.params['W3'], self.params['b3'])
        self.last_layer = Softmax()

    def __init_weight_conv(self, num_filters, data_dim, kernel_size, stride = 1, pad = 0, weight_std = 0.01):
        weights = weight_std * np.random.randn(num_filters, data_dim, kernel_size, kernel_size)
        biases = np.zeros(num_filters)
        return weights, biases

    def __init_weight_fc(self, num_inputs, num_outputs, weight_std = 0.01):
        weights = weight_std * np.random.randn(num_inputs, num_outputs)
        biases = np.zeros(num_outputs)
        return weights, biases

    def forward(self, x):
        for layer in self.layers.values():
            x = layer.forward(x)
        return x

    def loss(self, x, true_y):
        pred_y = self.forward(x)
        # Return the loss computed by the last layer (softmax with cross-entropy)
        return self.last_layer.forward(pred_y, true_y)

    def accuracy(self, x, true_y, batch_size = 100):
        if true_y.ndim != 1:
            true_y = np.argmax(true_y, axis = 1)

        accuracy = 0.0

        for i in range(int(x.shape[0] / batch_size)):
            tx = x[i*batch_size:(i+1)*batch_size]
            tt = true_y[i*batch_size:(i+1)*batch_size]
            y = self.forward(tx)
            y = np.argmax(y, axis = 1)
            accuracy += np.sum(y == tt)

        return accuracy / x.shape[0]

    def gradient(self, x, true_y):
        # Forward pass (caches the intermediate values each layer needs)
        self.loss(x, true_y)

        # Backward pass, from the loss back to the first layer
        dout = 1
        dout = self.last_layer.backward(dout)

        layers = list(self.layers.values())
        layers.reverse()

        for layer in layers:
            dout = layer.backward(dout)

        grads = {}
        grads['W1'], grads['b1'] = self.layers['Conv1'].dW, self.layers['Conv1'].db
        grads['W2'], grads['b2'] = self.layers['FC1'].dW, self.layers['FC1'].db
        grads['W3'], grads['b3'] = self.layers['FC2'].dW, self.layers['FC2'].db

        return grads

- Hyper Parameters

epochs = 10

train_size = x_train.shape[0]

batch_size = 200

learning_rate = 0.001

current_iter = 0

iter_per_epoch = max(train_size // batch_size, 1)

- Create and train the model

train_loss_list = []

train_acc_list = []

test_acc_list = []

model = MyModel()

# Check that the parameter keys were created correctly

model.params.keys()

# Output

dict_keys(['W1', 'b1', 'W2', 'b2', 'W3', 'b3'])

optimizer = SGD(learning_rate)

for epoch in range(epochs):
    for i in range(iter_per_epoch):
        # Sample a mini-batch (np.random.choice samples with replacement by default)
        batch_mask = np.random.choice(train_size, batch_size)
        x_batch = x_train[batch_mask]
        t_batch = t_train[batch_mask]

        grads = model.gradient(x_batch, t_batch)
        optimizer.update(model.params, grads)

        loss = model.loss(x_batch, t_batch)
        train_loss_list.append(loss)

        x_train_sample, t_train_sample = x_train, t_train
        x_test_sample, t_test_sample = x_test, t_test

        train_acc = model.accuracy(x_train_sample, t_train_sample)
        test_acc = model.accuracy(x_test_sample, t_test_sample)
        train_acc_list.append(train_acc)
        test_acc_list.append(test_acc)

        current_iter += 1

    print("Epoch: {} Train Loss: {:.4f} Train Accuracy: {:.4f} Test Accuracy: {:.4f}".format(epoch + 1, loss, train_acc, test_acc))

# Output

Epoch: 1 Train Loss: 2.1136 Train Accuracy: 0.4087 Test Accuracy: 0.3840

Epoch: 2 Train Loss: 1.5925 Train Accuracy: 0.6733 Test Accuracy: 0.5940

Epoch: 3 Train Loss: 0.9603 Train Accuracy: 0.7960 Test Accuracy: 0.7440

Epoch: 4 Train Loss: 0.5676 Train Accuracy: 0.8377 Test Accuracy: 0.7980

Epoch: 5 Train Loss: 0.4902 Train Accuracy: 0.8647 Test Accuracy: 0.8320

Epoch: 6 Train Loss: 0.4181 Train Accuracy: 0.8763 Test Accuracy: 0.8540

Epoch: 7 Train Loss: 0.3899 Train Accuracy: 0.8900 Test Accuracy: 0.8540

Epoch: 8 Train Loss: 0.3067 Train Accuracy: 0.8977 Test Accuracy: 0.8720

Epoch: 9 Train Loss: 0.3158 Train Accuracy: 0.8970 Test Accuracy: 0.8640

Epoch: 10 Train Loss: 0.2742 Train Accuracy: 0.9023 Test Accuracy: 0.8760

# Plot the accuracy

markers = {'train': 'o', 'test': 's'}

x = np.arange(current_iter)

plt.plot(x, train_acc_list, marker = 'o', label = 'train', markevery = 2)

plt.plot(x, test_acc_list, marker = 's', label = 'test', markevery = 2)

plt.grid()

plt.xlabel('epochs')

plt.ylabel('accuracy')

plt.ylim(0, 1.0)

plt.legend(loc = 'lower right')

plt.show()

# Plot the loss

x = np.arange(current_iter)

plt.plot(x, train_loss_list, marker = '^', label = 'train_loss', markevery = 2)

plt.grid()

plt.xlabel('epochs')

plt.ylabel('cost')

plt.ylim(0, 2.4)

plt.legend(loc = 'right')

plt.show()

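- Possible reasons training did not go as well as expected

- Too little training data

- Training time constraints

- Whether the number of nodes in the FC layer was appropriate

- Whether the learning rate was appropriate

- ...

- Which settings give the best result has to be found by trying different values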
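Trying different values can be organized as a small sweep. The sketch below is a stand-in, not the CNN above: to stay short and fast it trains a softmax regression with plain gradient descent on synthetic 2D blobs and compares a few learning rates. The data, the candidate values, and the function names `make_blobs` and `train_and_score` are all assumptions for illustration.

```python
import numpy as np

def make_blobs(n_per_class=100, seed=0):
    # Three Gaussian blobs in 2D, one per class (synthetic data)
    rng = np.random.default_rng(seed)
    centers = np.array([[0.0, 0.0], [3.0, 0.0], [0.0, 3.0]])
    X = np.vstack([c + rng.standard_normal((n_per_class, 2)) for c in centers])
    t = np.repeat(np.arange(3), n_per_class)
    return X, t

def train_and_score(X, t, learning_rate, epochs=100, seed=0):
    rng = np.random.default_rng(seed)
    num_classes = int(t.max()) + 1
    W = 0.01 * rng.standard_normal((X.shape[1], num_classes))
    b = np.zeros(num_classes)
    for _ in range(epochs):
        z = X @ W + b
        z = z - z.max(axis=1, keepdims=True)
        y = np.exp(z)
        y /= y.sum(axis=1, keepdims=True)
        y[np.arange(len(t)), t] -= 1          # gradient of softmax + cross-entropy
        W -= learning_rate * (X.T @ y) / len(t)
        b -= learning_rate * y.mean(axis=0)
    pred = (X @ W + b).argmax(axis=1)
    return float(np.mean(pred == t))          # accuracy on the training data

X, t = make_blobs()
candidates = [0.0001, 0.001, 0.01, 0.1]
scores = {lr: train_and_score(X, t, lr) for lr in candidates}
best_lr = max(scores, key=scores.get)

for lr, acc in scores.items():
    print(f"learning_rate={lr:<7} accuracy={acc:.3f}")
print("best:", best_lr)
```

For the MNIST model the same loop would wrap the model creation and training code, and the comparison would more sensibly use held-out data rather than training accuracy.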
